FINGERCODE FOR FINGERPRINT RECOGNITION IN

WAVELET TRANSFORM DOMAIN

JuCheng Yang, JinWook Shin, BungJun Min, Bin Yu, DongSun Park

Dept. of Infor.& Comm.Eng., Chonbuk National University, Jeonju, Jeonbuk, 561-756, Korea.

Keywords: Fingerprint, Recognition, FingerCode, Wavelet Transform.

Abstract: FingerCode has been an effective representation for both the local and global information in fingerprints

using their reference points. Wavelet transform is known to be a powerful tool for fingerprint enhancement

and feature extraction. In this paper, a novel method for fingerprint recognition using the FingerCode in

wavelet transform domain is proposed. The proposed method includes a new reference point detection

method in sub-images of the wavelet transform. Since the proposed method can be used for both feature

extraction and pre-processing, conventional pre-processing algorithms can be eliminated from recognition

steps and hence, it lowers the overall computational complexity of the recognition. Experimental results

show that the proposed method is more accurate and reliable than a traditional FingerCode method.

1 INTRODUCTION

Traditionally, passwords (knowledge-based security)

and badges (token-based security) have been used to

restrict access to secure systems. However, security

can be easily breached in these systems when a

password is divulged to an unauthorized user or a

badge is stolen by an impostor. The emergence of

biometrics has addressed the problems that plague

traditional identification or verification systems by

using certain physiological or behavioural

characteristics associated with an intended person,

such as fingerprints, hand geometry, iris, retina,

face, hand vein, facial thermograms, signature, and

voice-print. Biometric indicators provide uniqueness

and have an edge over traditional security methods

in that these attributes cannot be easily stolen or

shared. Among all the biometric indicators,

fingerprints have proven to provide one of the

highest levels of reliability and are extensively used in

many applications, for example, in criminal

investigations by forensic experts.

Three types of matching methods have been used

for fingerprint recognition: correlation-based

matching methods, minutiae-based matching

methods (Jang and Yau, 2000 – Liu, et al., 2000),

and texture-based (filter-based) matching methods

(Jain, et al., 1999 - 2000, Sha, et al., 2003). In

correlation-based matching methods, two fingerprint

images for matching are superimposed and the

correlation between corresponding pixels is

computed for different alignments such as various

displacements and rotations. Since the fingerprint

representation coincides with the whole fingerprint

image, these methods are quite time-consuming.

The most popular and widely used techniques for

matching are minutiae-based matching methods.

Methods of this type extract feature vectors from the

two fingerprints as sets of points in the two-

dimensional plane. They essentially consist of

finding the alignment of minutiae points between the

template and the input sets that results in the

maximum number of minutiae pairings. However, a

minutiae-based matching may not fully utilize

significant components of the rich discriminatory

information available in the fingerprints and it is

usually very time-consuming (Maltoni, et al., 2003).

The texture-based matching methods use features

from the fingerprint ridge pattern such as local

orientation and frequency, ridge shape, and texture

information, which can be extracted more reliably

than minutiae points. Filter-based matching is a type

of texture-based matching. The FingerCode

(Jain, et al., 1999 – 2000) and its improved

algorithms (Sha, et al., 2003) are shown to provide

effective representations by extracting filtered

features from the fingerprint image.

Wavelet transforms have been used for fingerprint

verification and recognition recently. Selvaraj et al

Yang J., Shin J., Min B., Yu B. and Park D. (2006).

FINGERCODE FOR FINGERPRINT RECOGNITION IN WAVELET TRANSFORM DOMAIN.

In Proceedings of the First International Conference on Computer Vision Theory and Applications, pages 161-165

DOI: 10.5220/0001369301610165

Copyright © SciTePress

(2003) proposed a method of matching between the

input image and the stored template without

resorting to exhaustive search using both the wavelet

statistical features and wavelet co-occurrence

features. However, it uses all the pixels in the

wavelet sub-band image for the computation of the

statistical features, and it is very time-consuming.

Tico et al. (2001) suggested another matching

algorithm using wavelet domain features. They used

a feature vector of length 12 to represent a

fingerprint image. The feature vector represents an

approximation of the image energy distribution over

different scales and orientations. Fung et al. (2004)

proposed an improvement on the approach of Tico et al.

(2001). In their work, critical wavelet coefficients

were selected to form a feature vector of a

fingerprint image. However, a vector of only 12

features cannot capture all the information in

a fingerprint image, so the recognition rate may

not be adequate for some applications. Lee W.K.

et al. (1997) proposed an algorithm that extracts the

dominant local orientation features in the wavelet

transform domain. The performance of the

algorithm is directly related to the accuracy of the

detection of the local directions. Mokju et al. (2004)

proposed an algorithm based on a directional image

constructed using the expanded Haar Wavelet

Transform. In that work, they first obtain a

directional image, and then quantize the directional

image into a few grey-level values that represent a

range of angle orientations. In this method, the

quantizing process may be error-prone in computing

the directional information.

To overcome the drawbacks of these methods, a

new matching method is proposed in this work. We

use a sophisticated FingerCode method in the

wavelet transform domain for fingerprint

recognition. In this work, FingerCodes are extracted

from the decomposed wavelet sub-band images instead

of the original fingerprint image. There are two

advantages to extracting features from the wavelet

sub-band images. Since the wavelet transform is a

multi-resolution tool in signal processing, it can easily

remove the high-frequency noise, usually contained

in HH sub-band image. With this approach one can

eliminate some pre-processing steps such as noise

removal, binarization, thinning and restoration. In

addition, each decomposed sub-band image has half the

width and height of its parent image, so that a matching method

using features extracted from sub-band images can

speed up the whole matching process compared to

other approaches.

The paper is organized as follows. In Section 2,

the theory of FingerCode is briefly reviewed. The

proposed recognition method is explained in Section

3 and its experimental results are shown in Section 4.

Concluding remarks are given in Section 5.

2 FINGERCODE

The FingerCode, introduced in ref (Jain et al., 2000),

is a fixed length representation that can effectively

capture both the local and global details in a

fingerprint, with a bank of Gabor filters. The typical

FingerCode generation process can be summarized

in the following steps:

1. Locate the reference point and determine the

region of interest for a fingerprint image.

2. Tessellate the region of interest, centered at the

reference point, into a series of B (=5) concentric

bands and divide each band into k (=16) sectors.

3. Normalize each sector to a predetermined

constant mean $M_0$ (=100) and variance $V_0$ (=100).

4. Filter the region of interest in eight different

directions using a bank of Gabor filters.

5. Compute the average absolute deviation from

the mean (AAD) of grey level values in each of the

80 sectors for every filtered image. The collection of

all the AAD features in each filtered image is

defined as FingerCode.

6. Rotate the features in the FingerCode cyclically

to generate five templates corresponding to five

rotations (±45°, ±22.5°, 0°) of the original fingerprint

image, thus approximating rotation invariance.

7. Rotate the original fingerprint image by an

angle of 11.25° and generate its FingerCode.

Another five templates corresponding to five

rotations are generated in the same way as step 6.

8. Match the FingerCode of the input fingerprint

with each of the ten templates stored in the database

to obtain ten matching scores. The final matching

score is the minimum of the ten matching scores,

which corresponds to the best matching of the two

fingerprints.
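Steps 2, 5, 6 and 8 above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the band width, the inner radius, all function names, and the use of Euclidean distance as the matching score are assumptions made for this example. Gabor filtering (step 4) is left out, so `aad_features` would be applied to each filtered image in turn to build one feature vector per filter direction.

```python
import math

B, K = 5, 16  # number of bands and sectors per band, as in step 2


def sector_index(x, y, cx, cy, band_width=20, inner_radius=12):
    """Return the sector index (0 .. B*K-1) of pixel (x, y) around the
    reference point (cx, cy), or None if the pixel lies outside the region
    of interest. band_width and inner_radius are assumed values."""
    r = math.hypot(x - cx, y - cy)
    if r < inner_radius or r >= inner_radius + B * band_width:
        return None
    band = int((r - inner_radius) // band_width)
    theta = math.atan2(y - cy, x - cx) % (2 * math.pi)
    sector = int(theta / (2 * math.pi / K)) % K
    return band * K + sector


def aad_features(image, cx, cy):
    """Average absolute deviation from the mean (AAD) of the grey levels
    in each of the B*K sectors of one (filtered) image."""
    members = [[] for _ in range(B * K)]
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            s = sector_index(x, y, cx, cy)
            if s is not None:
                members[s].append(value)
    features = []
    for values in members:
        if not values:
            features.append(0.0)  # empty sector, e.g. clipped by the border
            continue
        mean = sum(values) / len(values)
        features.append(sum(abs(v - mean) for v in values) / len(values))
    return features


def rotate_code(code, steps):
    """Cyclically shift a FingerCode by `steps` sectors (22.5 degrees each
    when K = 16). `code` holds one feature vector per filter direction;
    both the sector position and the direction index are shifted. The sign
    convention of the shift is an assumption."""
    n_dir = len(code)
    rotated = [[0.0] * (B * K) for _ in range(n_dir)]
    for d, features in enumerate(code):
        for i, value in enumerate(features):
            band, sec = divmod(i, K)
            rotated[(d + steps) % n_dir][band * K + (sec + steps) % K] = value
    return rotated


def match_score(query, templates):
    """Step 8: the final score is the minimum distance over all templates."""
    def distance(a, b):
        return math.sqrt(sum((u - v) ** 2
                             for fa, fb in zip(a, b)
                             for u, v in zip(fa, fb)))
    return min(distance(query, t) for t in templates)
```

With eight filter directions, the five templates of step 6 would correspond to `rotate_code(code, s)` for `s` in `(-2, -1, 0, 1, 2)`.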

In this paper, we use the reference point location

method developed in ref (Sha, et al., 2003) for the

original fingerprint image, which is known to be

robust and rotation-invariant. The average

orientation of each sector is also computed for the

reference point.

3 THE PROPOSED ALGORITHM

The proposed algorithm for the fingerprint

recognition consists of three main steps:

VISAPP 2006 - IMAGE UNDERSTANDING

1. Apply the discrete wavelet decomposition to a

fingerprint image.

2. Determine the reference point in the wavelet

sub-images.

3. Apply the FingerCode approach to the wavelet

sub-images.

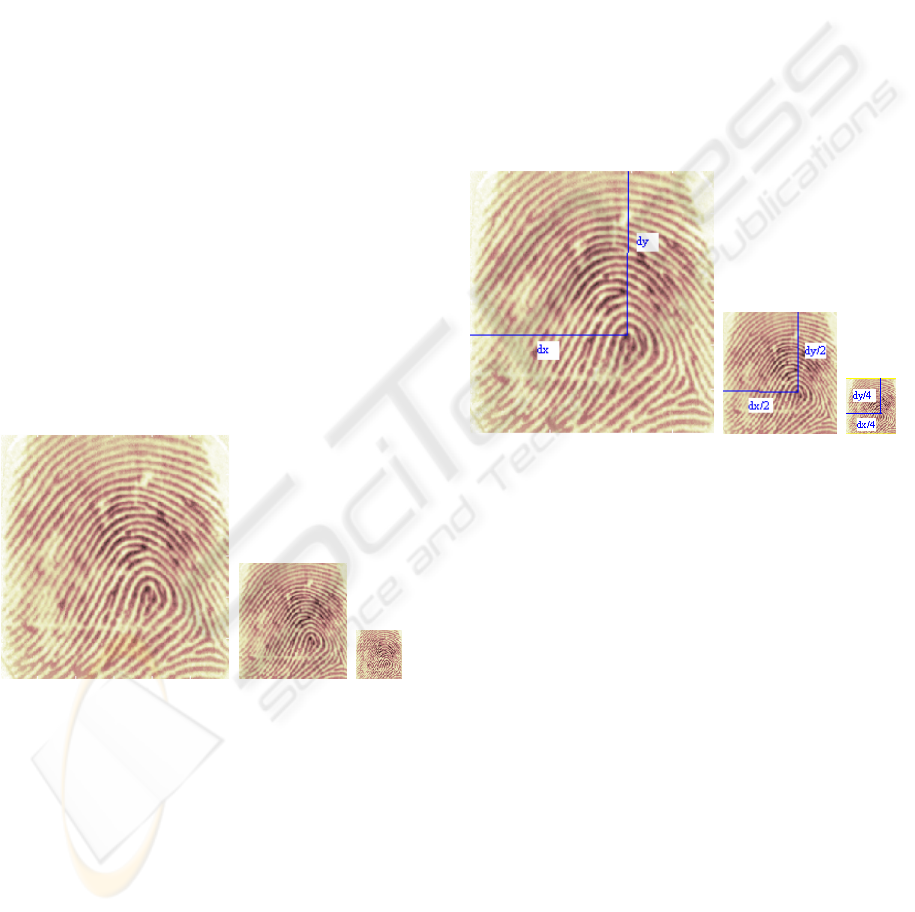

The first step is to apply the wavelet

decomposition. Typically, Daubechies wavelet

filters are reasonable tools for decomposing images

(Mallat, 1998); here, for simplicity, Db4 is chosen as

the wavelet basis. We use the Db4 wavelet to

decompose the fingerprint image into 2 levels; the

approximated sub-images LL1 and LL2 are shown

in Fig. 1. We choose the approximated sub-images

LL1 and LL2, and exclude LL3 and higher-level

decomposed sub-images, since higher-level

sub-images are so small that they

hardly provide unique information as FingerCode

features.
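The two-level decomposition can be illustrated with a short, dependency-free Python sketch. Note the substitution: for brevity it uses the Haar wavelet instead of Db4, so it only shows how the approximation sub-images LL1 and LL2 are obtained and how their size halves at each level. The function names are hypothetical. With a wavelet library such as PyWavelets, a call like `pywt.wavedec2(image, 'db4', level=2)` would be the closer match to the text, and the sub-image sizes may then differ slightly from exact halves because of boundary extension.

```python
def haar_ll(image):
    """One level of the 2-D orthonormal Haar transform, approximation (LL)
    band only: each output value is the sum of a 2x2 block divided by 2."""
    rows, cols = len(image) // 2, len(image[0]) // 2
    return [[(image[2 * r][2 * c] + image[2 * r][2 * c + 1]
              + image[2 * r + 1][2 * c] + image[2 * r + 1][2 * c + 1]) / 2.0
             for c in range(cols)]
            for r in range(rows)]


def approximation_pyramid(image, levels=2):
    """Return [LL1, LL2, ...] by repeatedly decomposing the LL band."""
    sub_images = []
    current = image
    for _ in range(levels):
        current = haar_ll(current)
        sub_images.append(current)
    return sub_images


# A 300x300 image, the size quoted for 101_7.tif, gives 150x150 and 75x75.
image = [[1.0] * 300 for _ in range(300)]
ll1, ll2 = approximation_pyramid(image, levels=2)
print(len(ll1), len(ll1[0]), len(ll2), len(ll2[0]))
```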

For the approximated sub-images, the ridges and

valleys may not be clearly defined due to the

approximation as in Fig. 1(b) and (c). Hence it is

very hard to find reference points directly from these

sub-band images using the traditional method. A

new reference point detection method for the wavelet

sub-images is therefore needed; it is described as

the second step of the proposed method.

(a) (b) (c)

Figure 1: (a), (b), (c) Original image 101_7.tif (300×300)

and its 1-level and 2-level decomposed sub-images LL1

and LL2 (Db4 wavelets used).

The second step is to determine the reference

points from the wavelet sub-images. Here we

propose a new method based on the method in ref

(Sha, et al., 2003). Since it is difficult to determine

the reference point in sub-band images with less

information, we first determine the reference point

(dx, dy) in the original fingerprint image using the

method in ref (Sha, et al., 2003). Then the algorithm

finds the location of the reference point in the

wavelet sub-images using the proportional location

of the reference point in the original image. Since

each level of the wavelet decomposition down-samples

by a factor of two, each decomposed sub-image

is half the size of the image one level above it. If

the reference point has coordinates (dx, dy) in the

original image, measured from the top-left point (0,0),

then its coordinates relative to the top-left point

of each sub-image can be estimated as (dx/2, dy/2) and

(dx/4, dy/4) in the LL1 and LL2 sub-images, as

shown in Fig. 2(b) and (c), respectively.
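The proportional mapping described above amounts to dividing the coordinates by two for each decomposition level. A minimal sketch follows; the function name and the example coordinates are hypothetical, and whether the result is rounded to integer pixel positions is left open because the text does not say.

```python
def map_reference_point(dx, dy, level):
    """Estimate the reference point in the level-`level` approximation
    sub-image from its coordinates (dx, dy) in the original image."""
    scale = 2 ** level
    return dx / scale, dy / scale


# Example: a reference point at (150, 120) in the original image.
print(map_reference_point(150, 120, 1))  # LL1 -> (75.0, 60.0)
print(map_reference_point(150, 120, 2))  # LL2 -> (37.5, 30.0)
```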

The third step is to apply the FingerCode method to the

wavelet sub-images, based on the method described

in ref (Sha, et al., 2003). Since the reference point

has already been located in the wavelet domain,

applying FingerCode for fingerprint recognition is

straightforward.

(a) (b) (c)

Figure 2: (a), (b), (c) The detected reference point in the

original image 101_7.tif (300×300) and its 1-level and 2-

level decomposed sub-images LL1 and LL2 (Db4

wavelets used).

4 EXPERIMENTAL RESULTS

The fingerprint image database used in this work is

the database of FVC2004 (http://bias.csr.unibo.it/fvc2004).

Four distinct databases, provided by the

organizers, constitute four benchmarks: DB1, DB2,

DB3 and DB4. Each database contains 880

fingerprints for 110 fingers, each with 8 samples.

The image format is the TIF with 256 grey levels.

The images are uncompressed with a resolution of

about 500dpi. The image size varies depending on

the database. The orientation of fingerprint is

approximately in the range [-30°, +30°] with respect

to the vertical orientation.

Each fingerprint in the database is matched with

all the other fingerprints in the 4 different databases.

A matching is labelled correct if the matched pairs

are determined as identical fingers. The recognition

rates of FingerCode applied to the wavelet sub-images LL1

and LL2 are shown in Table 1. The recognition rate

in LL1 is higher than in LL2 over all the databases.

It reaches 96.3% in the LL1 sub-image

on database DB1.

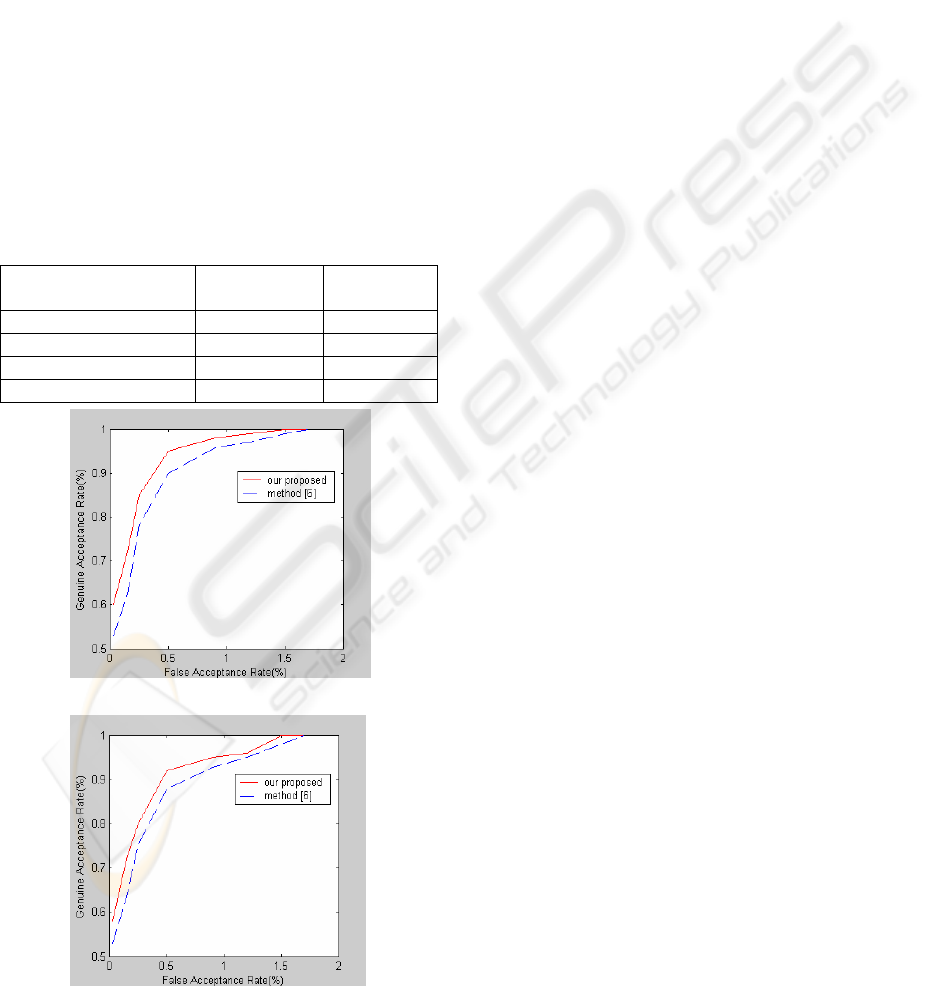

To compare the performance of the proposed

method with a typical method, a receiver operating

characteristic (ROC) is used. ROC is a plot of

Genuine Acceptance Rate (GAR) against False

Acceptance Rate (FAR). Fig. 3 compares the ROCs

of the method based on FingerCode in ref (Sha, et

al., 2003) with the proposed algorithm on databases

DB1 and DB2. Since the ROC curve of our

proposed algorithm lies above the curve of the method in

(Sha, et al., 2003), we consider that our algorithm

performs better than that method

on these databases. For example, at a 0.5% FAR, the

GAR of our proposed algorithm is 95.1%, while

that of the minutiae-based method is 90.3% on database DB1.
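For readers unfamiliar with ROC curves, the following sketch shows how (FAR, GAR) points can be computed from matching scores. It assumes, as an illustration only, that scores are distances (smaller means more similar) and that a pair is accepted when its distance does not exceed a threshold; the function name and the sample scores are made up for the example and are not results from this paper.

```python
def roc_points(genuine, impostor, thresholds):
    """Return a list of (FAR, GAR) pairs, one per threshold.

    genuine  -- distances between samples of the same finger
    impostor -- distances between samples of different fingers
    """
    points = []
    for t in thresholds:
        gar = sum(d <= t for d in genuine) / len(genuine)    # genuine accepted
        far = sum(d <= t for d in impostor) / len(impostor)  # impostors accepted
        points.append((far, gar))
    return points


# Toy scores: a lower threshold rejects more impostors but also more genuine pairs.
print(roc_points([1, 2, 3, 4], [3, 5, 6, 7], thresholds=[2, 4]))
# -> [(0.0, 0.5), (0.25, 1.0)]
```

A curve that lies above another has a higher GAR at every FAR, which is the sense in which one method is judged better in the comparison.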

Table 1: Comparison of the recognition rates in the wavelet

sub-images LL1 and LL2 on the databases DB1-4.

(a)

(b)

Figure 3: The ROC curves comparing the performance of the

proposed method with the method in (Sha, et al., 2003) based

on (a) DB1, (b) DB2.

5 CONCLUSION

In this work, a novel method for fingerprint

recognition using FingerCodes in the wavelet

transform domain is proposed. One marked

advantage of our proposed method is that many

conventional pre-processing steps such as smoothing,

binarization, thinning and restoration are not

necessary. Also, since the wavelet sub-band image

to be processed is smaller than the

original image, the computational complexity is also

lowered. Above all, experimental results show that

the proposed method outperforms the typical

FingerCode method in terms of accuracy and

reliability.

In addition, since the work is based on the

sophisticated FingerCode technology and the

reliable reference point detection algorithm in ref

(Sha, et al., 2003), the proposed recognition

algorithm can be robust to noise.

ACKNOWLEDGMENTS

This work is supported by Research Centre for

Advanced Image and Information Technology at

Chonbuk National University, Korea.

REFERENCES

Jang X. and Yau W.Y., 2000. In Proc. 15th Int. Conf. on

Pattern Recognition, Vol. 2, pp. 1024-1045.

Liu J., Huang Z., and Chan K., 2000. In Proc. Int. Conf.

on Image Processing, Vol. 2, pp. 427-430.

Jain A.K., Prabhakar S., Lin H., 1999. IEEE

Transactions on Pattern Analysis and Machine

Intelligence, Vol. 21, Issue 4, April, pp. 348-359.

Jain A.K., Prabhakar S., Lin H., Pankanti S., 1999. IEEE

Conf. on Computer Vision and Pattern Recognition,

Vol. 2, pp. 23-25.

Jain A.K., Prabhakar S., Hong L., Pankanti S., 2000.

IEEE Transactions on Image Processing, Vol. 9, No. 5,

pp. 846-859.

Sha L.F., Zhao F., Tang X.O., 2003. International

Conference on Image Processing, Vol. 2, pp. II-895-8,

vol. 3.

Selvaraj H., Arivazhagan S., Ganesan L., 2003. Fifth

International Conference on Computational

Intelligence and Multimedia Applications, 27-30,

pp. 430-435.

| Database / wavelet domain | LL1 | LL2 |
|---|---|---|
| DB1 | 96.3% | 95.1% |
| DB2 | 95.2% | 94.8% |
| DB3 | 94.7% | 91.7% |
| DB4 | 95.4% | 93.6% |

Tico M., Kuosmanen P., and Saarinen J., 2001. Electronics

Letters, Vol. 37, No. 1, pp. 21-22.

Tico M., Immonen E., Ramo P., Kuosmanen P., Saarinen

J., 2001. IEEE International Symposium on Circuits

and Systems, Vol. 2, pp. 21-24.

Fung Y.H., Chan Y.H., 2004. “Fingerprint recognition

with improved wavelet domain features,” Proceedings

of Intelligent Multimedia, Video and Speech

Processing, pp. 33-36.

Lee W.K., Chung J.H., 1997. IEEE International

Symposium on Circuits and Systems, Vol. 2, pp. 1201-1204.

Mokju M., Abu-Bakar S.A.R., 2004. International

Conference on Computer Graphics, Imaging and

Visualization, pp. 149-152.

Mallat S., 1998, the book, Academic Press.

Maltoni D., et al., 2003, the book, Springer.
